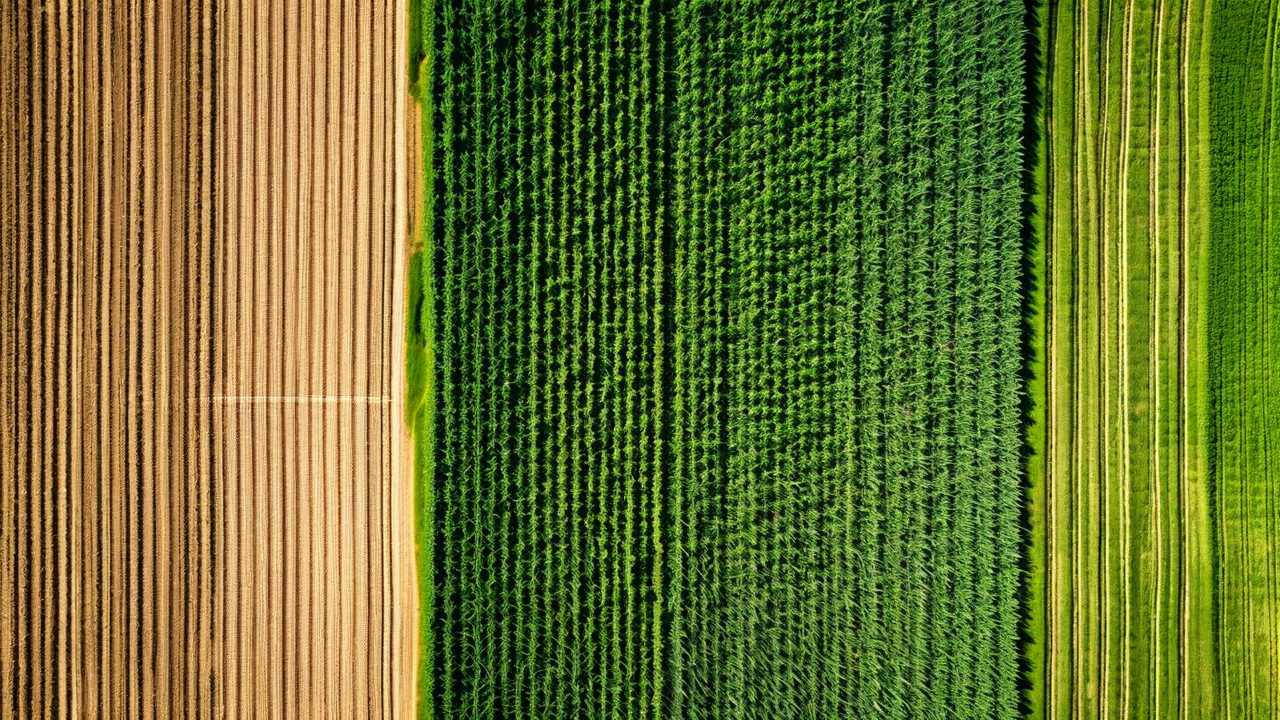

The satellite imagery that most farmers encounter through free tools like Google Earth or USDA's Cropland Data Layer is typically a few months to a few years old and has a spatial resolution of 10 to 30 meters per pixel. At 30 meters, each pixel covers a little under a quarter acre. That is fine for county-level crop mapping and very coarse field boundary detection, but it is not adequate for the kind of within-field variability analysis that drives precision management decisions.

The commercial satellite landscape in 2026 looks different from five years ago. Several constellations now offer multispectral imagery at 3 to 5 meters per pixel at revisit intervals of 3 to 5 days globally, with priority tasking options for even higher revisit frequency. Some operators can access 50-centimeter to 1-meter imagery on a daily basis for specific fields of interest. The resolution and frequency improvements are not incremental. They represent a fundamentally different capability for in-season monitoring.

What Resolution Actually Changes

At 30 meters per pixel, a 160-acre field is represented by roughly 720 pixels. At 5 meters per pixel, the same field is about 25,900 pixels. At 1 meter, it is about 647,000 pixels. That difference in data density is not just about making prettier maps. It changes what you can detect, what management zones you can delineate, and how precisely you can map a developing problem.
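The pixel counts come from dividing field area by the ground area of one pixel. A minimal sketch of that arithmetic, assuming square pixels and a field that fills its pixels exactly (real fields have edge pixels that mix in neighboring ground); the function name is ours for illustration:

```python
SQ_M_PER_ACRE = 4046.86  # square meters in one acre


def pixels_in_field(acres: float, resolution_m: float) -> int:
    """Approximate number of square pixels needed to cover a field."""
    field_area_m2 = acres * SQ_M_PER_ACRE
    pixel_area_m2 = resolution_m ** 2
    return round(field_area_m2 / pixel_area_m2)


# Pixel count for a 160-acre field at three resolutions
for resolution in (30, 5, 1):
    count = pixels_in_field(160, resolution)
    print(f"{resolution:>2} m/pixel: {count:,} pixels")
```

Because pixel area grows with the square of resolution, going from 30 meters to 5 meters multiplies the pixel count by 36, not 6.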

The practical threshold for within-field management zone mapping is around 5 to 10 meters per pixel. At that resolution, management zones of 2 to 5 acres are detectable and mappable. Smaller stress features, like an isolated compaction zone or a drainage problem from a failed tile segment, can be down to 0.5 acres and still show up clearly in the imagery rather than being averaged out into surrounding pixels.

Consider a gray leaf spot outbreak that starts in a 3-acre low-lying area in the northeast corner of a field after a foggy stretch of weather in late July. At 30-meter resolution, that 3-acre affected area covers roughly 13 pixels. The spectral signal is real but easily confused with adjacent soil variation or shadowing. At 5-meter resolution, the same area covers about 485 pixels with a clear geometric pattern matching the low topographic position. The analyst can locate it precisely and direct a scout to confirm it that afternoon.

Revisit Frequency and the Disease Detection Window

Spatial resolution matters, but for in-season crop monitoring, revisit frequency might matter more. The window between when a disease outbreak becomes detectable from satellite and when it is causing significant yield loss is narrow. For gray leaf spot in corn, the detectable signature appears about 7 to 10 days after initial infection under favorable conditions. Yield impact becomes significant around 14 to 21 days post-infection if untreated. That gives a detection-to-intervention window of roughly 5 to 10 days.

A satellite with a 14-day revisit interval might miss that window entirely. A pass on day 3 post-infection shows nothing. The next pass on day 17 shows an outbreak that is already past economical fungicide timing. A 3-day revisit interval catches the developing signature between days 7 and 10, when intervention is still effective.
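The timing argument can be checked with a small schedule sketch. It assumes passes fall on whole days, every pass is cloud-free, and the usable detection window is days 7 through 10 post-infection; the function names are ours for illustration:

```python
def passes_in_window(first_pass_day, revisit_days, window_start, window_end,
                     horizon_days=30):
    """Days (post-infection) on which a satellite pass lands inside the window."""
    pass_days = range(first_pass_day, horizon_days + 1, revisit_days)
    return [day for day in pass_days if window_start <= day <= window_end]


def hit_rate(revisit_days, window_start, window_end):
    """Fraction of possible first-pass offsets that put a pass in the window."""
    hits = sum(
        1 for offset in range(revisit_days)
        if passes_in_window(offset, revisit_days, window_start, window_end)
    )
    return hits / revisit_days


# 14-day revisit with a first pass on day 3: passes on days 3 and 17
print(passes_in_window(3, 14, 7, 10))   # []
# 3-day revisit with a first pass on day 3: passes on days 3, 6, 9, ...
print(passes_in_window(3, 3, 7, 10))    # [9]

# Share of orbit phasings that catch the window at all
print(hit_rate(14, 7, 10))  # 4 of 14 offsets
print(hit_rate(3, 7, 10))   # every offset
```

Under these assumptions a 14-day revisit catches the window only when the orbit phasing happens to line up, while a 3-day revisit catches it regardless of phasing.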

Our internal analysis across 1,200 fields monitored over the past two seasons showed that fields monitored with 3-day or shorter revisit intervals had fungicide applied at economically optimal timing in 84% of detected disease events. Fields monitored with 7-day or longer revisit intervals had optimal-timing applications in 61% of detected disease events. The 23-percentage-point gap in optimal timing translates to an average difference of $14.50 per acre in yield protection value for treated fields, based on our disease response models.

Cloud Cover Remains a Real Constraint

Higher resolution and more satellites do not solve the cloud problem. Optical sensors cannot image through clouds, and cloud cover in the Corn Belt during the growing season is frequent enough that guaranteed 3-day revisit intervals are often not achievable in practice. During June through August in Iowa and Illinois, cloud-free imagery at a specific field location averages 2 to 3 passes per week in a good year and 1 to 1.5 passes per week in a wet year.

The practical solution is data fusion across multiple satellites with different orbital parameters and timing. When four or five satellites with different ground track timings are tasked over the same area, the probability of a cloud-free pass in any given 3-day window increases substantially. CropMind uses imagery from multiple commercial constellation sources and fuses it into a composite product that reduces cloud coverage gaps. We also integrate synthetic aperture radar (SAR) data, which penetrates clouds, for vegetation index estimation during extended cloud cover periods, though SAR-derived indices are less accurate than optical multispectral indices for crop health assessment.
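A rough way to see why more passes help: if each pass has some chance of being cloud-free, the chance that at least one pass in the window is clear rises with the number of passes. The sketch below assumes passes are independent, which overstates the benefit because cloud cover is correlated across passes that fall close together in time. The 0.3 per-pass probability is an illustrative placeholder, not a measured value.

```python
def prob_clear_pass(p_clear_per_pass: float, n_passes: int) -> float:
    """P(at least one cloud-free pass), assuming independent passes."""
    if not 0.0 <= p_clear_per_pass <= 1.0:
        raise ValueError("p_clear_per_pass must be between 0 and 1")
    return 1.0 - (1.0 - p_clear_per_pass) ** n_passes


P_CLEAR = 0.3  # hypothetical chance that any single pass is cloud-free

for n_passes in (1, 2, 4, 5):
    p = prob_clear_pass(P_CLEAR, n_passes)
    print(f"{n_passes} pass(es) in the window: {p:.0%} chance of a clear image")
```

With these placeholder numbers, going from one pass to four in the same window raises the chance of a usable image from 30% to about 76%.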

What Higher Resolution Enables for Management Zone Analysis

Beyond disease detection, higher-resolution multispectral data changes what is possible for management zone delineation at the start of a season. A 5-meter NDRE image from early-season satellite passes, combined with historical yield data and soil sampling, produces management zone boundaries that are accurate to within 1 to 2 field rows rather than the 50 to 100-foot boundaries typical of 30-meter imagery analysis.
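For readers unfamiliar with the index, NDRE (Normalized Difference Red Edge) is computed per pixel from near-infrared and red-edge reflectance. The sketch below shows the standard formula on a tiny grid; the reflectance values are made up for illustration, and returning 0.0 for an empty pixel is our assumption rather than a standard.

```python
def ndre(nir: float, red_edge: float) -> float:
    """Normalized Difference Red Edge index for one pixel's reflectances."""
    denominator = nir + red_edge
    if denominator == 0:
        return 0.0  # assumed fill value for pixels with no signal
    return (nir - red_edge) / denominator


# Hypothetical 2x3 grid of (NIR, red-edge) reflectance pairs
grid = [
    [(0.45, 0.15), (0.44, 0.16), (0.30, 0.20)],
    [(0.46, 0.14), (0.32, 0.21), (0.28, 0.22)],
]

ndre_grid = [[round(ndre(nir, re), 2) for nir, re in row] for row in grid]
for row in ndre_grid:
    print(row)
```

Lower values in a contiguous patch, like the right-hand side of this toy grid, are the kind of pattern that gets compared against yield history and soil samples when drawing zone boundaries.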

This matters for planter control systems that execute variable-rate prescriptions. A planter with row-by-row control wastes fewer transition rows at zone boundaries when its seeding prescription is derived from 5-meter zone boundaries than when it is derived from 30-meter zone boundaries. The row-exact zone transition is only practical when the underlying zone map is accurate at that scale. The satellite resolution improvement makes that accuracy achievable without supplementary drone flights over the entire farm.

Cost Implications

Higher-resolution imagery costs more per acre. The business case depends on what that resolution enables. For operations already enrolled in a monitoring program using coarser imagery, the upgrade to 5-meter multispectral typically adds $3 to $6 per acre to imagery cost. For operations above roughly 500 acres that actively manage multiple crops, the additional decision value in disease detection timing and management zone precision exceeds that cost.
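One way to frame the break-even: the added imagery cost applies to every enrolled acre, while the benefit accrues only on acres where better data changes a decision. The sketch below computes the share of acres that must benefit for the upgrade to pay for itself. The $15 per benefiting acre is a hypothetical placeholder for illustration, not a figure from our analysis.

```python
def break_even_fraction(added_cost_per_acre: float,
                        value_per_benefiting_acre: float) -> float:
    """Share of enrolled acres that must benefit for the upgrade to break even."""
    if value_per_benefiting_acre <= 0:
        raise ValueError("value_per_benefiting_acre must be positive")
    return added_cost_per_acre / value_per_benefiting_acre


PLACEHOLDER_VALUE = 15.0  # hypothetical $/acre gained where a decision improves

# Low and high ends of the $3 to $6 per acre added imagery cost
for added_cost in (3.0, 6.0):
    share = break_even_fraction(added_cost, PLACEHOLDER_VALUE)
    print(f"${added_cost:.2f}/acre added cost: break-even at {share:.0%} of acres")
```

A result above 100% would mean the upgrade cannot pay for itself at that value per acre, which is the situation where coarser imagery is the better choice.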

For smaller operations or operations where disease management is less critical, the coarser imagery may be the right cost-benefit choice. The technology being available at higher resolution does not mean every farm needs to pay for it. We try to match resolution tiers to the management practices and crop types of each farm in our network rather than defaulting to the highest specification across the board.

See What Current Satellite Data Shows for Your Fields

We can pull a current multispectral composite for any field in the continental US and walk you through what the data shows. Request a demo and tell us which fields you want to start with.
